Partial Taxonomy of 8-bit Floating-Point Formats

P3109, OCP OFP8 and challenges of small 8-bit FP formats

This blog post is not centered on RISC-V; it reviews recent developments in floating-point arithmetic around the standardization of small (8-bit) floating-point formats.

Initially published March 9th 2024; updated March 10th 2024 to unify the naming of dummy fp8 formats (thank you to Al Martin for pointing out the discrepancies).

Introduction

Machine learning has broadened the need for small floating-point formats. The huge computational needs of ever-growing neural networks, combined with their ability to accommodate and exploit values of limited accuracy, have renewed interest in small formats: first 16-bit formats (binary16, a.k.a. half precision, and BF(loat)16, a.k.a. Brain Float 16), and later even smaller ones. This interest has now extended to 8-bit sizes. Similarly to what happened before the advent of the IEEE-754 standard, numerous incompatible 8-bit floating-point formats from different manufacturers and standard organizations have emerged.

This post considers some of the challenges associated with defining 8-bit floating-point formats and reviews some of the existing answers to those challenges. We will see that many different formats already exist and that specifications generally limit themselves to defining one or more formats and their conversion behaviors, without specifying (yet) a full set of arithmetic operations on those formats. 8-bit floating-point formats seem to be mostly used to store neural network parameters and activations, while the actual layer computations are performed in higher precision.

The post is organized as follows: the first section considers what an 8-bit floating-point format derived from IEEE-754 would look like. The next section presents the two Open Compute Project OFP8 formats and reviews some of their characteristics. The third section covers the IEEE P3109 working group interim report, which specifies another set of 8-bit formats. We then present other proposals (not standardized, to the best of our knowledge), briefly mention RISC-V, and conclude.

This post is not exhaustive: we try to cover some of the 8-bit floating-point formats, in particular those backed by a standards organization. For example, we do not cover Graphcore’s formats in depth, although the company seems to have been quite active in defining and experimenting with lower-precision floating-point formats.

Defining 8-bit FP format(s) using IEEE-754

Let us first look at what a standard 8-bit floating-point format could look like. Here, "standard" should be understood as "derived from the IEEE-754 standard for floating-point arithmetic". This theoretical format definition (in fact, a family of formats) is not used in practice, but it is useful to understand some of the compromises required when defining a good 8-bit floating-point format.

The IEEE-754 standard specifies floating-point (FP) formats, including the widely used binary FP formats such as binary64 (double precision), binary32 (single precision) and binary16 (half precision). Although IEEE-754 rules do not allow for the definition of formats smaller than binary16, they could theoretically be extended to define 8-bit formats (with varying exponent sizes).

For example, a dfp8p<p> (dummy floating-point 8-bit) template format could be defined with p bits of significand, that is, an m-bit mantissa and an e-bit exponent with m=p-1 and e=8-p=7-m. As in IEEE-754 binary FP encodings, the significand includes an implicit leading bit. By construction e+p=e+m+1=8 (or e+m=7), the remaining bit being the sign. As with any IEEE-754 format, dfp8 exponents are biased with b=2^(e-1)-1: an encoded (biased) exponent value E represents a real exponent of E-b. The format contains special values (NaN and infinities, encoded by E=2^e-1), subnormal and zero values (implicit bit at 0, E=0) and normal values (implicit bit at 1, all other exponents).
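To make these rules concrete, here is a minimal Python sketch of a decoder for the hypothetical dfp8p<p> formats. The function name `decode_dfp8` is ours and purely illustrative; it simply applies the IEEE-754 style rules listed above (bias b=2^(e-1)-1, all-ones exponent for specials, zero exponent for subnormals) to an 8-bit encoding, for p between 2 and 6.

```python
import math

def decode_dfp8(byte: int, p: int) -> float:
    """Decode an 8-bit encoding of the hypothetical dfp8p<p> format (2 <= p <= 6)."""
    if not 2 <= p <= 6:
        raise ValueError("this sketch covers dfp8p2 .. dfp8p6 only")
    m = p - 1                      # mantissa width (explicit significand bits)
    e = 7 - m                      # exponent width, so that 1 + e + m = 8
    bias = 2 ** (e - 1) - 1        # IEEE-754 style bias
    sign = -1.0 if byte & 0x80 else 1.0
    exp_field = (byte >> m) & ((1 << e) - 1)
    mant = byte & ((1 << m) - 1)
    if exp_field == (1 << e) - 1:  # all-ones exponent: infinities and NaNs
        return sign * math.inf if mant == 0 else math.nan
    if exp_field == 0:             # zero and subnormals: implicit bit is 0
        return sign * (mant / 2 ** m) * 2.0 ** (1 - bias)
    return sign * (1 + mant / 2 ** m) * 2.0 ** (exp_field - bias)  # normal values

# Largest finite dfp8p4 value: exponent field 1110, mantissa 111
print(decode_dfp8(0x77, 4))  # prints 240.0
```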

This template could be instantiated with different precisions: for example, dfp8p4 has a 4-bit significand and a 4-bit exponent (and a 3-bit mantissa). Similarly, one could define dfp8p6 (2-bit exponent), dfp8p5 (3-bit exponent), dfp8p3 (5-bit exponent) and dfp8p2 (6-bit exponent). The need for multiple 8-bit floating-point formats is magnified by the limited range of values of each format: multiple works have shown that defining more than one format was instrumental in making FP8 useful in real applications (see, for example, FP8 Formats for Deep Learning).

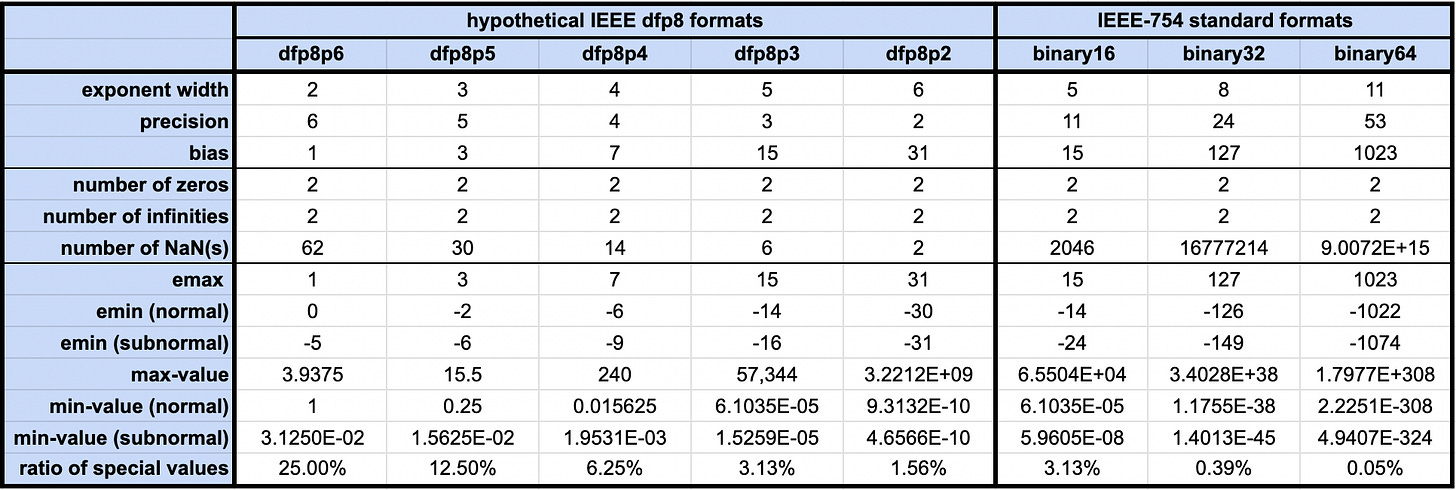

The table below lists key parameters and properties of 5 hypothetical dfp8 formats with exponent sizes ranging from 2 to 6 bits. Minimum and maximum values are reported as well as the ratio of the encoding space used by special cases (NaNs and infinities).

For such a small format size (8 bits), IEEE-754 special values represent a large part of the encoding space. With a 3-bit exponent (dfp8p5), infinities and NaNs occupy 1/8 (12.5%) of the space. This is because IEEE-754 dedicates a full exponent value to special values (hence a ratio of 1/2^e). This is negligible for wide standard formats but significant for 8-bit formats. Wasting so much encoding space on those values does not seem worthwhile for 8-bit formats.

Even the two signed zeros (+0 and -0) occupy almost 1% (~0.78%) of the encoding space for dfp8 formats.
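The key properties of the five dfp8 formats follow in closed form from the exponent width e, the mantissa width m and the bias b. The sketch below (the helper name `dfp8_properties` is ours) derives the extremal values, the number of positive subnormals (2^m - 1) and the ratio of encodings taken by infinities and NaNs (1/2^e).

```python
def dfp8_properties(p: int) -> dict:
    """Closed-form parameters of the hypothetical dfp8p<p> format."""
    m = p - 1
    e = 7 - m
    bias = 2 ** (e - 1) - 1
    emin = 1 - bias                 # exponent of the smallest normal value
    emax = (2 ** e - 2) - bias      # the all-ones exponent is reserved for specials
    return {
        "exp_bits": e,
        "bias": bias,
        "min_subnormal": 2.0 ** (emin - m),
        "min_normal": 2.0 ** emin,
        "max_normal": (2 - 2.0 ** -m) * 2.0 ** emax,
        "positive_subnormals": 2 ** m - 1,
        "special_ratio": 1 / 2 ** e,  # one exponent value out of 2**e
    }

for p in range(6, 1, -1):
    props = dfp8_properties(p)
    print(f"dfp8p{p}: e={props['exp_bits']} bias={props['bias']} "
          f"max={props['max_normal']:g} min_subnormal={props['min_subnormal']:g} "
          f"specials={props['special_ratio']:.2%}")
```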

One interesting way to visualize a format’s dynamics is to look at the range of its non-special values. For visibility, we have plotted the 5 theoretical dfp8 formats on a logarithmic scale, with the horizontal axis representing the positive encodings:

The larger the exponent width, the wider the dynamic range. We also see that for narrower exponents the special cases (infinities and NaNs) start at an earlier index, illustrating that a larger part of the encoding space is used by special values.

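Note: all 5 dfp8 formats have the same encoding for 2.0: 0x40. This encoding has a zero mantissa (significand of 1.0) and a biased exponent of 2^(e-1) (an exponent field with only its most significant bit set), which corresponds to a real exponent of 1:

$$2^{e-1} - b = 2^{e-1} - (2^{e-1} - 1) = 1$$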
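As a quick check of this note, the short sketch below builds the bit pattern of +2.0 from the dfp8p<p> field layout (sign bit 0, biased exponent 1 + b, mantissa 0) for each of the five formats. The helper name `encode_two` is hypothetical.

```python
def encode_two(p: int) -> int:
    """Encoding of +2.0 = 1.0 * 2**1 in the hypothetical dfp8p<p> format."""
    m = p - 1
    e = 7 - m
    bias = 2 ** (e - 1) - 1
    biased_exp = 1 + bias          # real exponent 1, i.e. 2**(e-1)
    return biased_exp << m         # sign bit 0, mantissa bits 0

for p in range(2, 7):
    print(f"dfp8p{p}: 2.0 -> {encode_two(p):#04x}")
```

Since the biased exponent 2^(e-1) is shifted left by m bits, the result is always 2^(e-1+m) = 2^6 = 0x40, whatever the split between exponent and mantissa.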

On the left-hand side of the graph, we can see the subnormal ranges, where the logarithmic plots stop being linear. These ranges grow as the mantissa size increases (i.e. as the exponent size decreases), since each format has 2^m - 1 positive subnormal values (2^m encodings if zero is counted).

This hypothetical definition of dfp8 formats appears inefficient: too much encoding space is wasted (in particular for smaller exponent sizes). We will use those formats as a baseline to compare against the actual 8-bit formats being used in the wild (or proposed).

OpenCompute: two OFP8 formats

The Open Compute Project, or OCP for short, has defined its own set of 8-bit FP formats in a dedicated standard: the OCP 8-bit Floating Point Specification (OFP8). Version 1.0 of this standard has had the “approved” status since June 20th 2023.

The OCP defines itself as follows:

The Open Compute Project (OCP) is a collaborative community focused on redesigning hardware technology to efficiently support the growing demands on compute infrastructure.

The OFP8 specification was co-authored by people from NVIDIA, Intel, ARM, Google, AMD and Meta (with some feedback provided by AWS), so we can speculate that those companies are likely to adopt it. NVIDIA already implements it in its GPU tensor cores, starting with the Hopper generation. This specification seems to be the one with the most diverse industry adoption at the moment.

The OCP document specifies two OFP8 formats: E4M3 and E5M2. The former has a 4-bit exponent and a 3-bit mantissa, while the latter boasts a wide 5-bit exponent at the price of a narrower 2-bit mantissa; both formats have a 1-bit sign. Those formats differ from the dummy dfp8 formats we defined previously.

From OCP specification:

The E5M2 format represents infinities and NaNs. Interpretation of the three mantissa values for NaNs is not defined. The E4M3 format does not represent infinities and uses only two bit patterns for NaN (a single mantissa-exponent bit pattern but allowing both values of the sign bit) in order to increase emax to 8 and thus to increase the dynamic range by one binade.

Note: a binade is the set of numbers in a floating-point format which share the same exponent value and emax is the maximum format exponent.
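The following minimal Python sketch decodes both OFP8 formats, using the exponent biases of the specification (7 for E4M3, 15 for E5M2) and the special encodings quoted above. The names `decode_ofp8` and `OFP8` are ours, not part of the OCP document.

```python
import math

# (exponent bits, mantissa bits, bias) for the two OFP8 formats
OFP8 = {"e4m3": (4, 3, 7), "e5m2": (5, 2, 15)}

def decode_ofp8(byte: int, fmt: str) -> float:
    e, m, bias = OFP8[fmt]
    sign = -1.0 if byte & 0x80 else 1.0
    exp_field = (byte >> m) & ((1 << e) - 1)
    mant = byte & ((1 << m) - 1)
    all_ones = (1 << e) - 1
    if fmt == "e4m3":
        # No infinities: only S.1111.111 is NaN, the rest of the top binade is normal.
        if exp_field == all_ones and mant == (1 << m) - 1:
            return math.nan
    elif exp_field == all_ones:
        # E5M2 keeps IEEE-754 style specials: mantissa 0 -> infinity, otherwise NaN.
        return sign * math.inf if mant == 0 else math.nan
    if exp_field == 0:
        return sign * (mant / 2 ** m) * 2.0 ** (1 - bias)   # zero and subnormals
    return sign * (1 + mant / 2 ** m) * 2.0 ** (exp_field - bias)

for fmt in OFP8:
    values = [decode_ofp8(b, fmt) for b in range(256)]
    finite = [v for v in values if math.isfinite(v)]
    specials = 256 - len(finite)
    print(f"{fmt}: max={max(finite):g} specials={specials}/256 ({specials / 256:.2%})")
```

Enumerating all 256 encodings this way recovers the maximal values (448 for E4M3, 57344 for E5M2) and the number of special encodings (2 and 8, respectively).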

Let us plot the dynamic range of the OFP8 formats compared to the theoretical IEEE dfp8 formats presented in the previous section:

We see that OFP8 e4m3 and the dummy IEEE dfp8p4 overlap almost completely. This could be expected, as they have identical mantissa and exponent widths. However, e4m3 has 7 more positive values near the maximum (from 256 to 448), thanks to the reduction of the number of NaN encodings compared to the theoretical IEEE format. OFP8 e5m2 and our hypothetical dfp8p3 overlap completely, with no noticeable differences.

This is visible in the table below which lists some of the key parameters and properties of the OFP8 formats alongside the values for the theoretical dfp8 formats.

OFP8 e4m3 has only 0.78% of its encodings dedicated to special values (the two NaN patterns), none of them being dedicated to infinities.

OFP8 e5m2 and dfp8p3 share the same ratio of special-value encodings (3.13%) and are in fact identical (same exponent width, same precision, same bias, and same min and max values).

Operations on OFP8 values

The OCP specification defines conversions from “wider floating point types (IEEE binary32, binary16)”, with a saturating mode and a rounding mode (only round-to-nearest is mandated). During a conversion, the input value’s significand is first rounded to the destination format’s significand width. If the resulting value exceeds the maximum normal number, the result depends on the mode:

in saturating mode, the maximum normal value is returned (with input sign)

in non-saturating mode, the result depends on the format:

E4M3: the format does not have any infinities, so values which fall outside the normal range are converted to NaN.

E5M2: values which fall outside the normal range are converted to infinities (with the input sign).
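The conversion rules above can be sketched in Python as follows. This is an illustration under assumptions, not the specification’s reference algorithm: we assume round-to-nearest with ties to even, only handle finite inputs (NaN and infinity inputs are left out), and `convert_to_ofp8` / `FORMATS` are hypothetical names. The function returns the rounded value as a Python float rather than its 8-bit encoding.

```python
import math

# Per-format parameters: (mantissa bits, exponent of the smallest normal,
# largest normal value, non-saturating overflow result).
FORMATS = {
    "e4m3": (3, -6, 448.0, math.nan),     # no infinities: overflow gives NaN
    "e5m2": (2, -14, 57344.0, math.inf),  # overflow gives (signed) infinity
}

def convert_to_ofp8(x: float, fmt: str, saturate: bool) -> float:
    """Round a finite float to an OFP8 value (round-to-nearest, ties to even)."""
    if not math.isfinite(x):
        raise ValueError("this sketch only handles finite inputs")
    m, emin, max_normal, overflow = FORMATS[fmt]
    mag = abs(x)
    if mag == 0.0:
        return x                      # keep the sign of zero
    # Exponent of the leading bit, clamped to emin so that tiny inputs
    # are rounded on the subnormal grid.
    exp = max(math.frexp(mag)[1] - 1, emin)
    ulp = 2.0 ** (exp - m)            # spacing of representable values at that exponent
    rounded = round(mag / ulp) * ulp  # Python's round() breaks ties to even
    if rounded > max_normal:
        rounded = max_normal if saturate else overflow
    return math.copysign(rounded, x)

for x in (0.3, 464.0, 500.0):
    print(x, convert_to_ofp8(x, "e4m3", saturate=True),
          convert_to_ofp8(x, "e4m3", saturate=False))
```

Note that the overflow test is applied after rounding, as described above: 464.0 lies between 448 and 480 and rounds (ties to even) down to 448, so it does not overflow, whereas 500.0 rounds to 512 and therefore saturates to 448 or becomes NaN.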

IEEE P3109: binary8p<n> formats

Another 8-bit floating-point standard is being developed by the IEEE. The IEEE (Institute of Electrical and Electronics Engineers) is famous for numerous standards used in computer architecture, including the IEEE-754 standard for floating-point arithmetic (initially published in 1985, revised in 2008 and 2019, with a new revision underway for 2029). The specification process for the IEEE 8-bit floating-point formats is owned by the IEEE P3109 working group. The group shares some public information on GitHub: https://github.com/P3109/Public.

In an interim report (updated February 24th 2024), we can see the current draft of the specification. P3109 defines seven 8-bit floating-point formats dubbed binary8p1 to binary8p7, where p<n> describes the precision: n is the significand size (including the implicit bit). Hence binary8p<n> is encoded with an (n-1)-bit mantissa, a 1-bit sign and an (8-n)-bit exponent.

The 7 formats share some characteristics:

a single zero value encoded as 0x00 (hexadecimal)

a single Not a Number, NaN, value encoded as 0x80

signed infinities: +Inf encoded as 0x7f and -Inf encoded as 0xff

binary8p1 is somewhat special: it has a single bit of precision and no encoded mantissa (the significand of non-special values is always 1.0). Its exponent bias is derived with the same rule as IEEE-754 standard formats: 2^(exponent_width - 1) - 1 = 2^6 - 1 = 63. This format also does not contain any subnormals: all non-special values are normal numbers.

The 6 other formats define their exponent bias as 2^(exponent_width - 1), which means it is even (whereas IEEE-754 biases are odd), except when p=7, where the bias is 1.
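A minimal Python sketch of a binary8p<p> decoder following the rules summarized above: single zero 0x00, single NaN 0x80, infinities 0x7f/0xff, and bias 2^(exponent_width - 1). We assume IEEE-754 style subnormals (exponent field 0) for p between 2 and 7 and leave binary8p1 out, since it has no subnormals and a different bias rule. The function name is hypothetical, and the draft may still change.

```python
import math

def decode_binary8p(byte: int, p: int) -> float:
    """Decode a P3109-style binary8p<p> encoding (2 <= p <= 7 in this sketch)."""
    if not 2 <= p <= 7:
        raise ValueError("this sketch covers binary8p2 .. binary8p7 only")
    if byte == 0x80:
        return math.nan                # the single NaN
    if byte == 0x7F:
        return math.inf
    if byte == 0xFF:
        return -math.inf
    m = p - 1
    e = 8 - p
    bias = 2 ** (e - 1)                # IEEE-754 would use 2**(e-1) - 1
    sign = -1.0 if byte & 0x80 else 1.0
    exp_field = (byte >> m) & ((1 << e) - 1)
    mant = byte & ((1 << m) - 1)
    if exp_field == 0:                 # zero (0x00 only) and subnormals
        return sign * (mant / 2 ** m) * 2.0 ** (1 - bias)
    return sign * (1 + mant / 2 ** m) * 2.0 ** (exp_field - bias)

# 0x7e is the largest finite encoding since 0x7f is +Inf
for p in (3, 4):
    print(f"binary8p{p}: max normal = {decode_binary8p(0x7E, p):g}")
```

Under these assumptions, the maximal normal values are 49152 for binary8p3 and 224 for binary8p4.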

Comparing the P3109 binary8p3 format (respectively binary8p4) to the OFP8 e5m2 (respectively e4m3) format, we can see similar dynamics. However, we can spot the difference in bias value (16 versus 15, respectively 8 versus 7): the P3109 format values are always below the corresponding OFP8 format values by a constant logarithmic offset (for a given index, the OFP8 value is twice the P3109 value).

One thing to notice is that the smaller number of special-value encodings in P3109’s binary8p3 compared to OFP8’s e5m2 allows the P3109 format to reach a comparable maximal normal value (49152 versus 57344, although at a greater index) despite its larger bias. This is not true for P3109’s binary8p4 compared to OFP8’s e4m3: the former’s maximal normal value (224) is exactly half that of the latter (448). The reason is that in this case both formats’ maximal normal values have the same encoding (0x7e), but the larger P3109 bias decreases the maximal exponent by one compared to the OFP8 format.

The interim P3109 report contains the following table comparing the IEEE binary8p[3,4,5] formats to the OCP OFP8 formats, the AGQ (AMD, Graphcore and Qualcomm) and the TSL (Tesla) 8-bit floating-point formats.

Note: this table seems to contain a few inaccuracies (although it might be our own understanding which is incorrect): from what we could find, the OCP OFP8 and Tesla’s CFloat8 formats do define negative zero values.

Currently, P3109 only defines classification and comparison operations on the binary8p formats. It does not specify conversions, although they are mentioned in the document (as one of the motivations for introducing infinities: allowing multiple behaviors for overflowing conversions).
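Because the binary8p encodings are sign-magnitude with a single zero and a single NaN, classification and comparison reduce to simple integer manipulations. The sketch below is our own illustration: the function names are hypothetical, the interim report’s exact operation set and semantics may differ, NaN is treated as unordered, and the subnormal/normal distinction assumes p between 2 and 7.

```python
from typing import Optional

NAN, POS_INF, NEG_INF, ZERO = 0x80, 0x7F, 0xFF, 0x00

def classify(byte: int, p: int) -> str:
    """Classify a binary8p<p> encoding (2 <= p <= 7 in this sketch)."""
    if byte == NAN:
        return "nan"
    if byte in (POS_INF, NEG_INF):
        return "infinite"
    if byte == ZERO:
        return "zero"
    exp_field = (byte & 0x7F) >> (p - 1)   # drop the sign, then the p-1 mantissa bits
    return "subnormal" if exp_field == 0 else "normal"

def order_key(byte: int) -> int:
    """Map a non-NaN sign-magnitude encoding to an integer with the same ordering."""
    magnitude = byte & 0x7F
    return -magnitude if byte & 0x80 else magnitude

def compare(a: int, b: int) -> Optional[int]:
    """Return -1, 0 or 1, or None when the operands are unordered (NaN involved)."""
    if a == NAN or b == NAN:
        return None
    ka, kb = order_key(a), order_key(b)
    return (ka > kb) - (ka < kb)

print(classify(0x01, 4), compare(0x81, 0x00), compare(0x80, 0x00))
```

The ordering key works for every precision p because, within each sign, larger magnitudes always have larger encodings, with +Inf (0x7f) and -Inf (0xff) at the extremes.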

Other formats: Microsoft ms-fp(8-12), Tesla, …

Microsoft msfp(8-11)

At HotChips 2019 (HC31), Microsoft presented its project Brainwave, which implements a set of formats called msfp8-11 (Microsoft’s slides). Brainwave is deployed on FPGAs. The Microsoft MSFP formats described in this work are 8 to 11 bits wide, with a 1-bit sign, a 5-bit exponent and a 2- to 5-bit mantissa; the 2-bit mantissa version is an 8-bit floating-point format. It was demonstrated in a vector-matrix multiplication engine whose inputs and outputs are half-precision values: the inputs are converted to MSFP formats and the outputs are converted back to half precision. The focus was on computation efficiency rather than storage efficiency. This 5-bit exponent format can be seen as being to half precision what BFloat16 is to single precision: same exponent size and reduced mantissa width.

Microsoft msfp12

More recently, in an MSFP-12 blog post (and paper), Microsoft presented a block floating-point format: each value is a 4-bit fixed-point number (1-bit sign and 3-bit mantissa), extended to a 12-bit floating-point number by an 8-bit exponent shared among multiple values (the group of values is called a bounding box, and its size is 16 in the example presented in Microsoft’s post). This construction, known as block floating-point, is not new, but it proved very efficient for neural network inference workloads.
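To illustrate the block floating-point idea, here is a minimal quantize/dequantize sketch. It is not Microsoft’s implementation: the exponent-selection rule (leading bit of the largest magnitude in the block), the ties-to-even rounding with clipping, and the function name `bfp_quantize` are our assumptions, and the shared exponent is not clamped to an 8-bit range.

```python
import math

def bfp_quantize(values, block_size=16, mant_bits=3):
    """Quantize a list of floats to a block floating-point format and return the
    dequantized values: each block of block_size values shares one exponent, and
    each value keeps a sign bit plus a mant_bits-bit magnitude (no implicit bit)."""
    out = []
    qmax = 2 ** mant_bits - 1                  # largest magnitude code, 7 for 3 bits
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        max_mag = max(abs(v) for v in block)
        # Shared exponent: position of the leading bit of the block's largest magnitude.
        shared_exp = math.frexp(max_mag)[1] - 1 if max_mag > 0 else 0
        # Step chosen so the largest magnitude maps into [2**(mant_bits-1), 2**mant_bits).
        step = 2.0 ** (shared_exp - (mant_bits - 1))
        for v in block:
            q = min(round(abs(v) / step), qmax)  # ties to even, then clip to 3 bits
            out.append(math.copysign(q * step, v))
    return out

print(bfp_quantize([1.0, 0.5, -0.25, 0.1, 1.9]))  # [1.0, 0.5, -0.25, 0.0, 1.75]
```

The example shows the two typical costs of sharing an exponent: small values in a block dominated by a large one (0.1 here) are flushed to zero, and the largest value may be clipped (1.9 becomes 1.75).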

Graphcore fp8

Graphcore has been very active in the domain of small floating-point formats and their application to deep learning. Graphcore’s researchers Noune et al. introduced 8-bit floating-point formats in their paper 8-bit numerical formats for deep learning (June 2022, pdf on arXiv). Graphcore’s research team also published Training and inference of large language models using 8-bit floating-point (September 2023, pdf on arXiv).

Tesla CFloat8 (Configurable Float8)

Tesla’s configurable 8-bit floating-point formats, CFloat8, are presented in Tesla Dojo Technology: A Guide to Tesla’s Configurable Floating Point Formats & Arithmetic. The guide is presented as a standard defining two formats, CFloat8_1_4_3 and CFloat8_1_5_2, with respectively a 4-bit exponent / 3-bit mantissa and a 5-bit exponent / 2-bit mantissa. Rather than a fixed value, the bias is a configurable 6-bit unsigned integer (64 bias values for each format). Neither format offers encodings for NaNs or infinities, but both contain zero, normal and subnormal values.
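A minimal sketch of a CFloat8 decoder with a configurable bias, based only on the description above: the field widths follow the format names, all 256 encodings are finite, and we assume IEEE-754 style subnormal handling (exponent field 0). The names `decode_cfloat8` and `CFLOAT8` are hypothetical, and details of Tesla’s guide not mentioned here are not reproduced.

```python
import math

# (exponent bits, mantissa bits), following the CFloat8_1_4_3 / CFloat8_1_5_2 names
CFLOAT8 = {"1_4_3": (4, 3), "1_5_2": (5, 2)}

def decode_cfloat8(byte: int, fmt: str, bias: int) -> float:
    """Decode a CFloat8 encoding with a configurable 6-bit bias; no NaN/Inf encodings."""
    if not 0 <= bias <= 63:
        raise ValueError("the bias is a 6-bit unsigned integer")
    e, m = CFLOAT8[fmt]
    sign = -1.0 if byte & 0x80 else 1.0
    exp_field = (byte >> m) & ((1 << e) - 1)
    mant = byte & ((1 << m) - 1)
    if exp_field == 0:                   # zero and subnormals (assumed IEEE-like)
        return sign * (mant / 2 ** m) * 2.0 ** (1 - bias)
    # Every other encoding, including the all-ones exponent, is a normal value.
    return sign * (1 + mant / 2 ** m) * 2.0 ** (exp_field - bias)

# Largest and smallest positive CFloat8_1_4_3 values for a few bias choices
for bias in (0, 7, 8, 15, 16, 32, 63):
    print(bias, decode_cfloat8(0x7F, "1_4_3", bias), decode_cfloat8(0x01, "1_4_3", bias))
```

With bias 7, the CFloat8_1_4_3 encodings below 0x7f decode to the same values as OFP8 e4m3 (e.g. 0x7e gives 448), while 0x7f, a NaN in OFP8, becomes the extra value 480.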

The following plot compares the dynamic ranges of a few instances of the Tesla CFloat8 formats (with bias = 0, 7, 8, 15, 16, 32 and 63) to the OFP8 and P3109 formats.

As expected we have some significant overlaps (although not on the full encoding range because of different choices of special value encodings):

OFP8 e5m2 overlaps with CFloat8_1_5_2 with bias=15

OFP8 e4m3 overlaps with CFloat8_1_4_3 with bias=7

P3109 binary8p3 overlaps with CFloat8_1_5_2 with bias=16

P3109 binary8p4 overlaps with CFloat8_1_4_3 with bias=8

Thanks to the configurable bias value, CFloat8 formats offer a wider range of values overall. Moreover, the absence of special values frees a few extra encodings. The price to pay is that operations on NaNs/infinities (coming from other formats) and invalid or overflowing operations produce the maximum value, which can make errors quite difficult to spot.

Huang’s Flexible Floating-Point 8-bit (FFP8)

The Flexible Floating-Point 8-bit (FFP8) format was presented by Huang et al. in All-You-Can-Fit 8-Bit Flexible Floating-Point Format for Accurate and Memory-Efficient Inference of Deep Neural Networks (pdf on arXiv). This family of formats adds two extra parameters: the bias value and the sign. The sign can be omitted and the bias can be any integer value (no explicit size limitation). The authors justify this choice by the ability to fit a wider variety of value distributions more closely. The configurable bias can act as a scaling factor, but it needs to be stored somewhere.

RISC-V support for 8-bit floating-point

As the focus of this blog is RISC-V, we had to insert a quick mention of RISC-V support for 8-bit floating-point formats. At the time of writing, the RISC-V ISA does not offer any standard support for any 8-bit floating-point format. The floating-point SIG (FP-SIG) is considering it as part of its gap analysis for the ISA, and the group is discussing which of P3109 or Open Compute OFP8 should be selected for RISC-V. If you want to be part of the discussion, feel free to join the SIG: https://lists.riscv.org/g/sig-fp.

Conclusion

Multiple FP8 formats have been proposed, and at least two different standards/drafts exist (Open Compute OFP8 and IEEE’s P3109). The IEEE P3109 working group document is not a final standard and may still evolve, whereas Open Compute’s OFP8 standard has already reached the Approved state. OFP8 seems to be used by the largest number of companies at the moment, including NVIDIA.

Numerous other proposals have been made and some are even used in production. All those proposals have in common that they suggest defining at least two FP8 formats, as a single format does not appear sufficient to cover most use cases. It also looks like we are converging towards 4-bit and 5-bit exponents for the two formats, with some variations in the presence and encoding of special values (infinities and NaNs) and in the exponent bias used. The bias difference translates into different extremal values. All formats try to minimize the ratio of encodings dedicated to special values, with some formats even omitting special value encodings altogether.

We might consider Open Compute OFP8 and IEEE P3109 as the two current competing standards, given the number of companies behind each of them (with some overlap). They have made different choices for bias values and encodings, but neither offers a configurable bias, contrary to some of the other formats used in the literature (e.g. Tesla’s CFloat8).

References:

Graphcore’s 8-bit numerical formats for deep learning, Noune et al., June 2022

Intel, ARM, NVIDIA’s FP8 Formats for Deep Learning, Micikevicius et al., September 2022

OpenCompute: OCP 8-bit Floating Point Specification (OFP8), June 2023

IEEE-SA P3109 working group interim report (September 2023 - February 2024)

John D. Cook’s Eight bit floating-point

A Microsoft custom data type for efficient inference, Bita Darvish Rouhani et al., December 2020

Microsoft HotChips 31 (2019) slides: (Cloud) acceleration at Microsoft (mention of project Brainwave and msfp8-11 formats)

NVIDIA’s Using FP8 with Transformer Engine

Python scripts to generate (positive) values of various FP8 formats: fp8Formats.py

Graphcore’s Training and inference of large language models using 8-bit floating-point, Perez et al., September 2023

Microsoft’s Serving DNNs in Real-Time at Datacenter scale with project Brainwave, Chung et al., March 2018
